# Project

A Cycloid project view is composed of several tabs. The Pipelines and InfraView tabs are described on their own pages; the other tabs can be configured and are explained later on this page.

# Environments

# Introduction

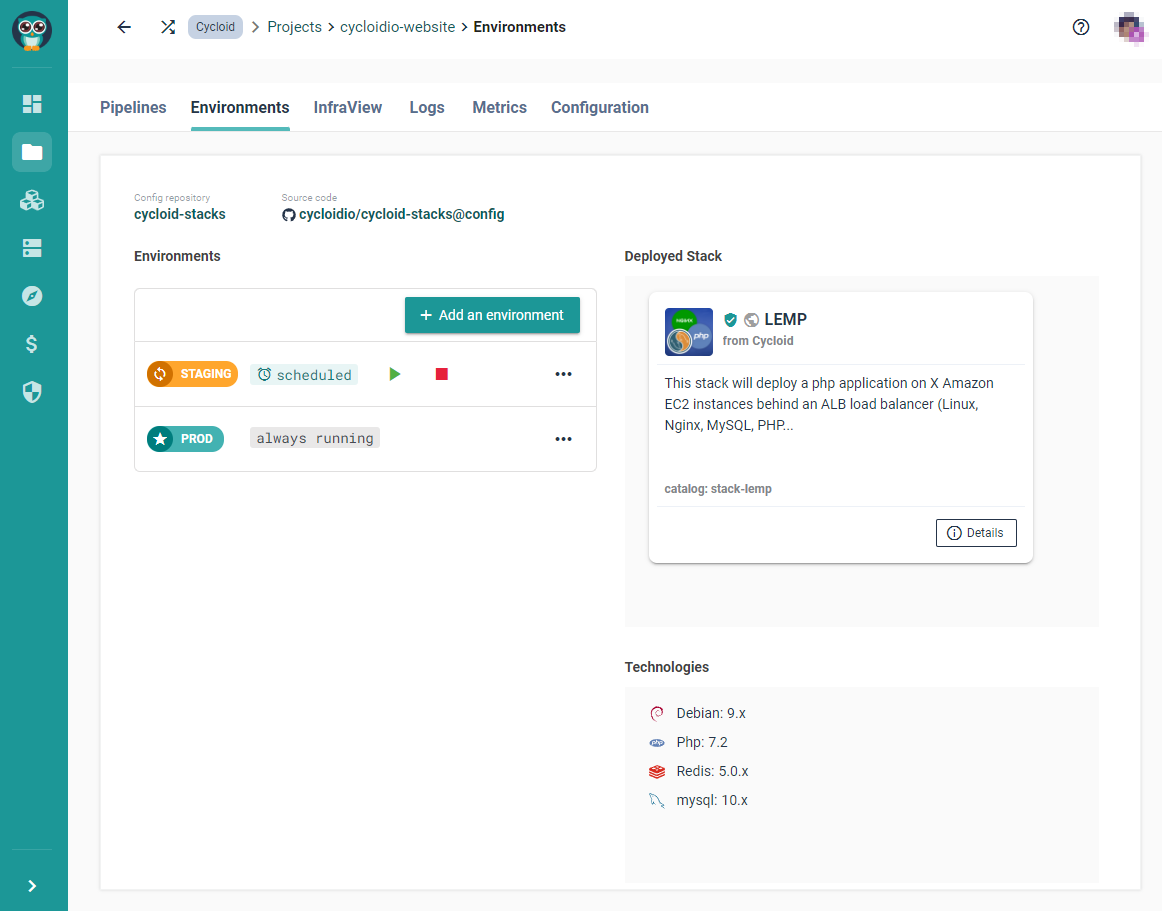

The Environments tab gives you insight into several elements of your project:

- Which config repository has been used? (Config Repository)

- Is auto start/stop enabled? (Environments)

- What have you installed? (Deployed Stack)

- Which version is it running? (Technologies)

In this section you can not only see a summary of what has been set up, but also configure the automatic start and stop times for one or all of your environments. For example, here is the environment view for our website.

# Config Repository

If a config repository is linked to the project, its details are visible at the top of the environments section, including the config repository name and Git URL. Clicking the config repository name takes you to the config repository details page, whilst clicking the URL takes you to the Git repository itself.

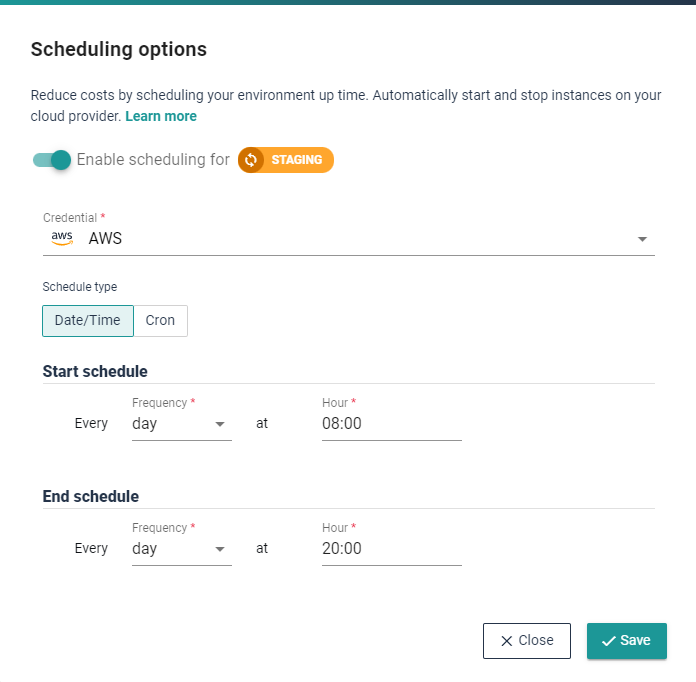

# Start and stop environments

To enable automatic start and stop of an environment, click on the 'Schedule' button for the selected environment as shown below.

Through the 'Schedule' option you can either configure the start and stop timings, with frequency and preferred times, or enter a cron expression directly by clicking on the 'Cron' tab.

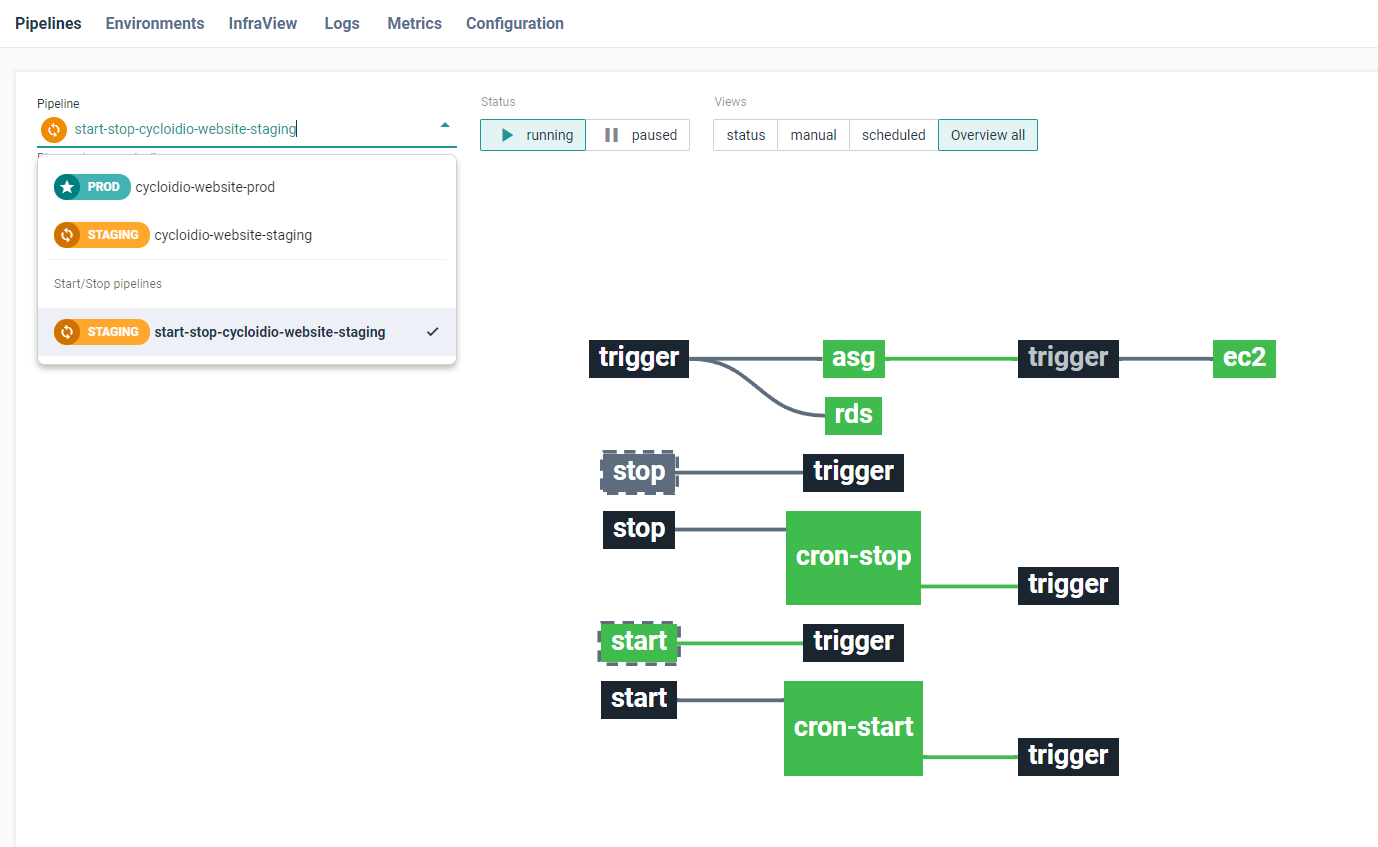
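As an illustration of what could go in the 'Cron' tab, here is a minimal sketch that builds start and stop expressions. It assumes the tab accepts the standard five-field cron syntax (minute, hour, day of month, month, day of week), which this page does not confirm; `daily_cron` is a hypothetical helper, not part of Cycloid.

```python
def daily_cron(hour: int, minute: int = 0, weekdays: str = "1-5") -> str:
    """Return a five-field cron expression: minute hour day-of-month month day-of-week."""
    if not (0 <= hour <= 23 and 0 <= minute <= 59):
        raise ValueError("hour must be 0-23 and minute must be 0-59")
    # "* *" means every day of the month and every month; weekdays "1-5" is Monday to Friday.
    return f"{minute} {hour} * * {weekdays}"


start = daily_cron(8)       # start the environment on weekdays at 08:00
stop = daily_cron(19, 30)   # stop the environment on weekdays at 19:30
print(start)
print(stop)
```

Check in the console which time zone the schedule is evaluated in before relying on such expressions.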

Once the configuration looks valid to you, click 'Save'. This creates a dedicated pipeline set up with the chosen schedule. You can also access it from the Pipelines tab, either to see its status or to trigger it manually.

# Deployed Stack

The deployed stack box shows the stack that has been installed from the Catalog Repositories - whether it is a public or private one. It provides very basic information on the name, the function, the author, etc.

# Technologies

This is a simple summary of what the stack defines. A stack is written against specific versions of software (OS, services, AWS services, etc.), and this box tries to sum up the most relevant ones.

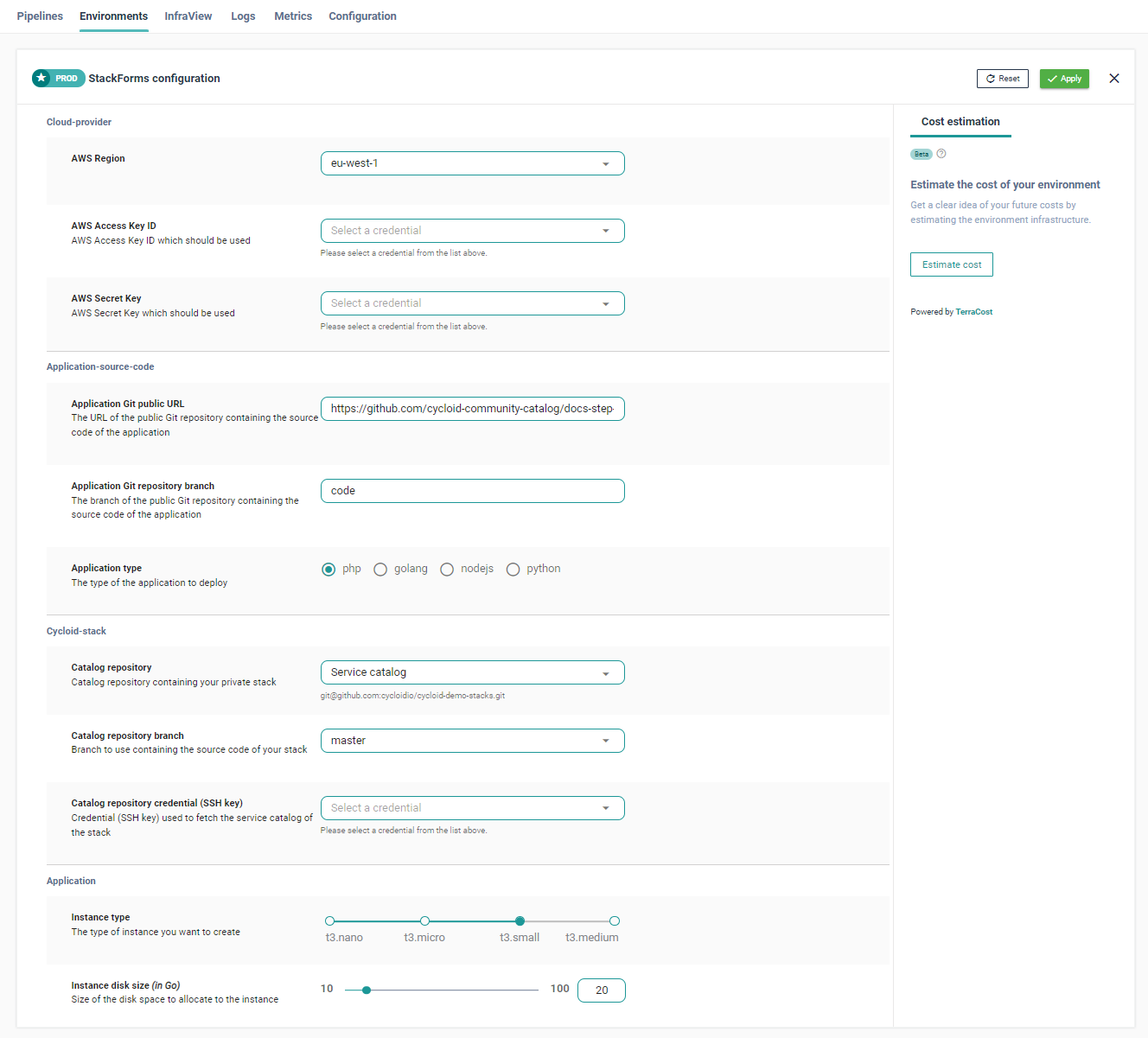

# Stackform

Here's a sample of how it could look:

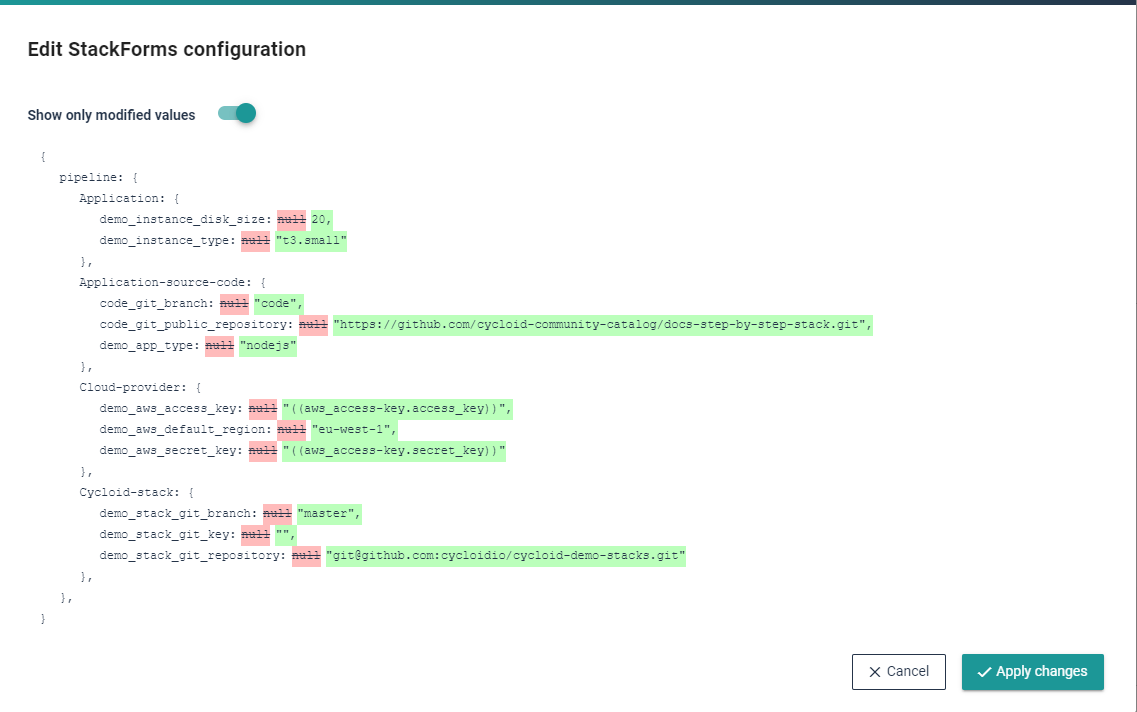

Once the project is created, you can edit it via the Environments tab, which shows a page similar to the creation one.

When your changes are done, click on 'Apply': a modal window will display all the changes that are about to be applied. Click on 'Apply changes' to confirm.

Please note this might take a bit of time, as files need to be regenerated and pushed into the project's config repository.

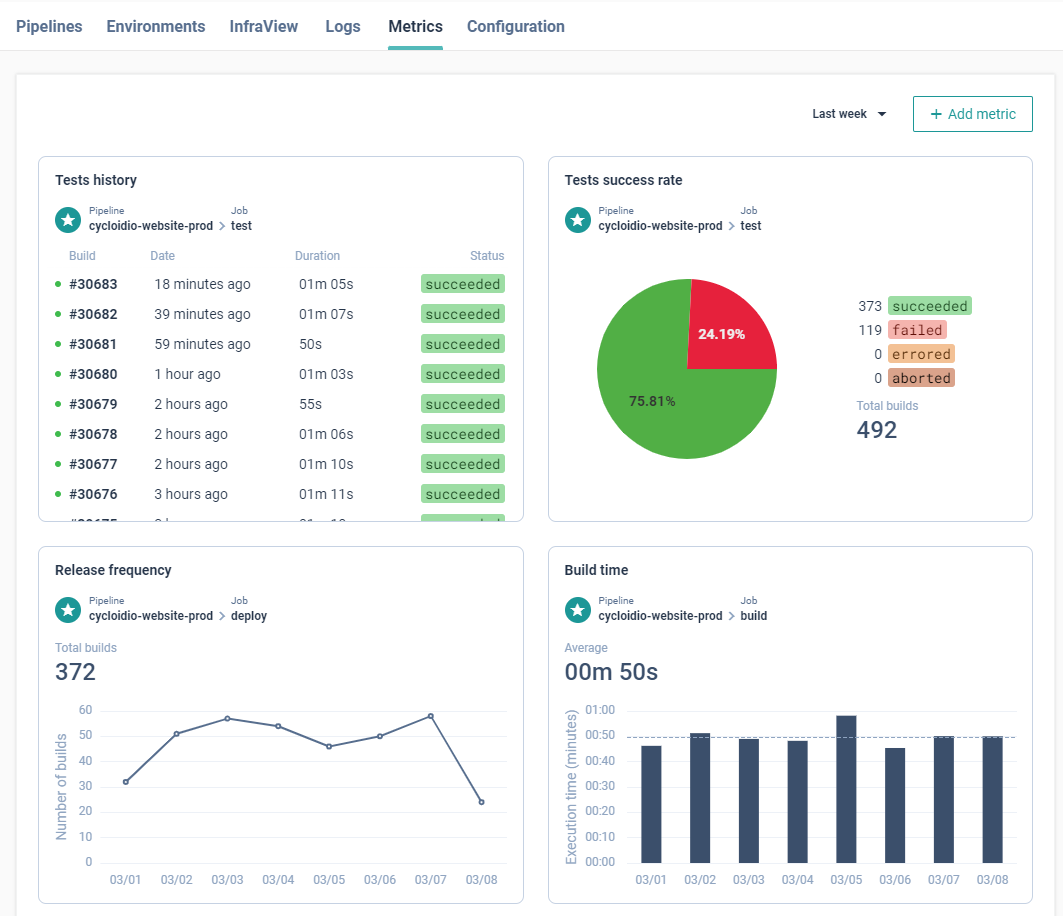

# Metrics

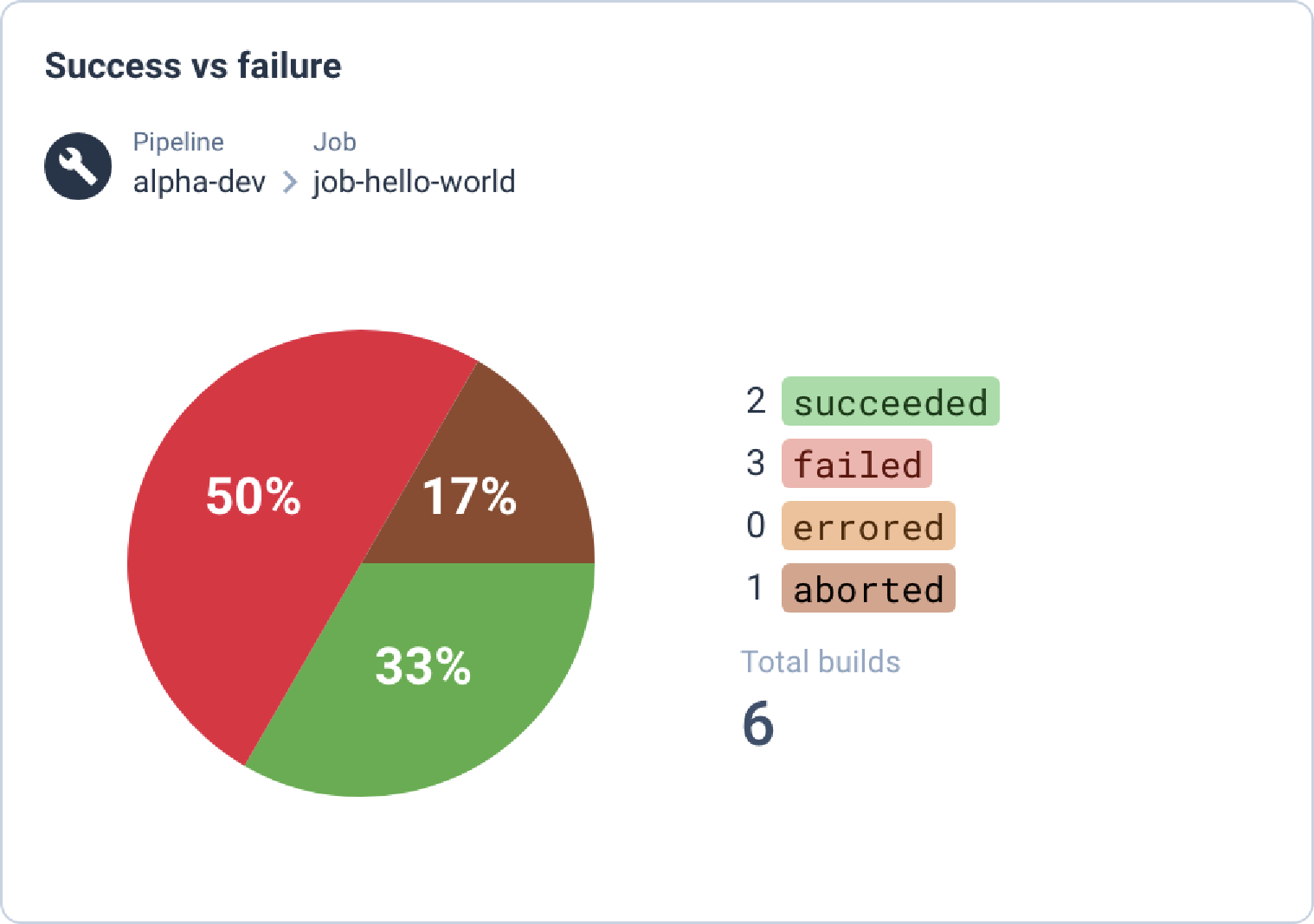

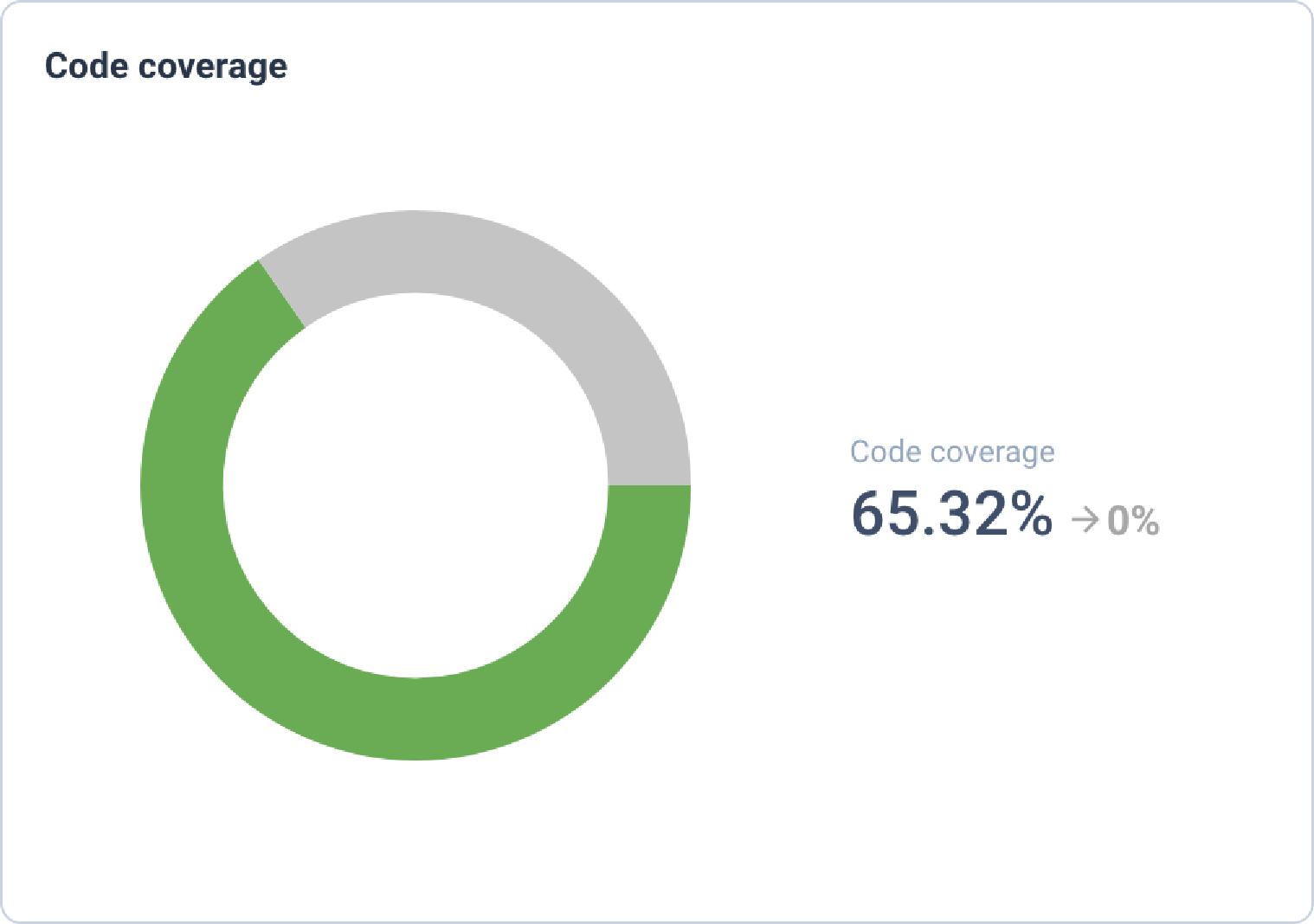

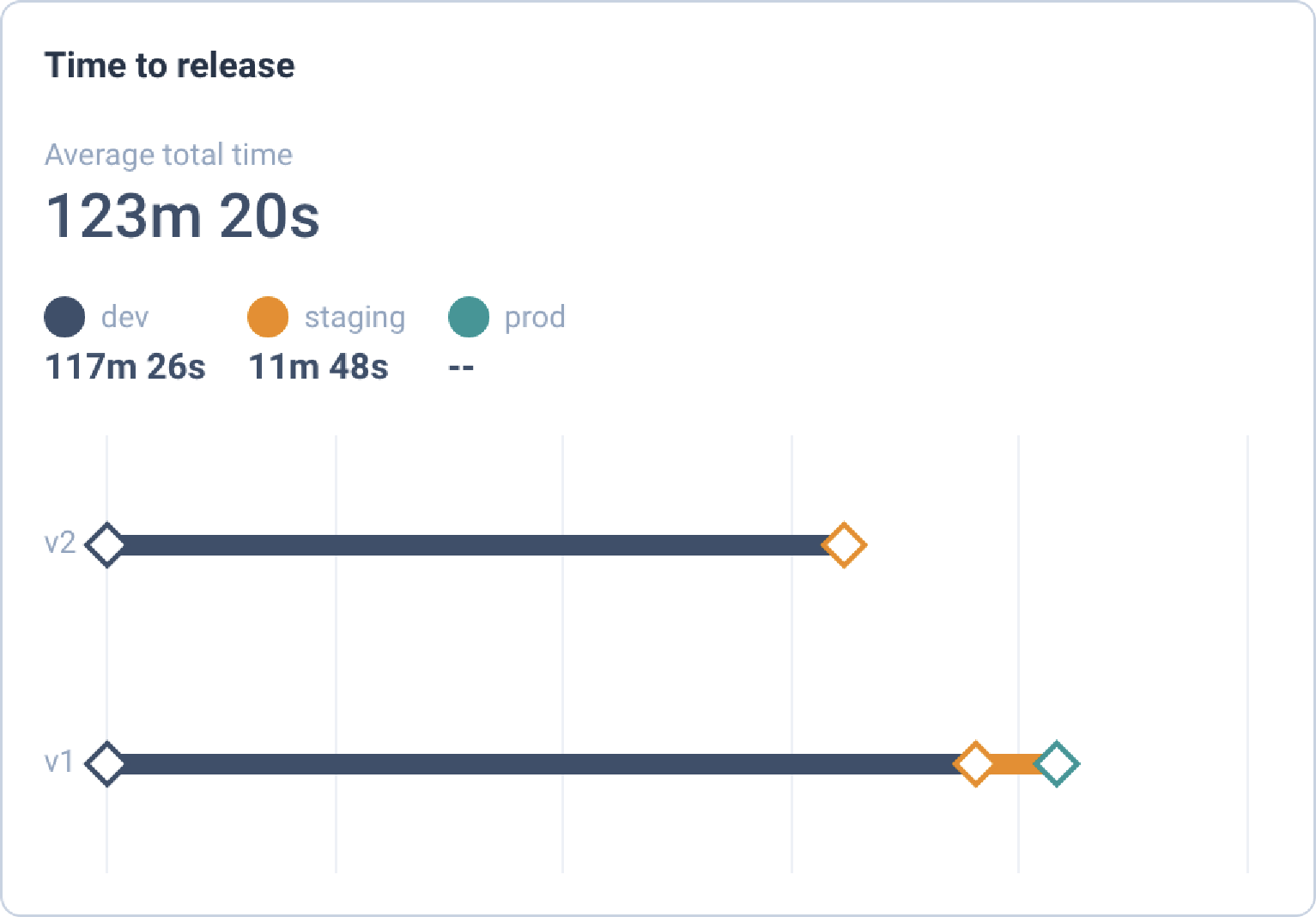

In the Metrics tab you can configure Key Performance Indicators (KPIs) that provide useful information about your pipelines, jobs, deployments, code coverage, etc. Please note that this feature is still at an early stage, but more will come soon!

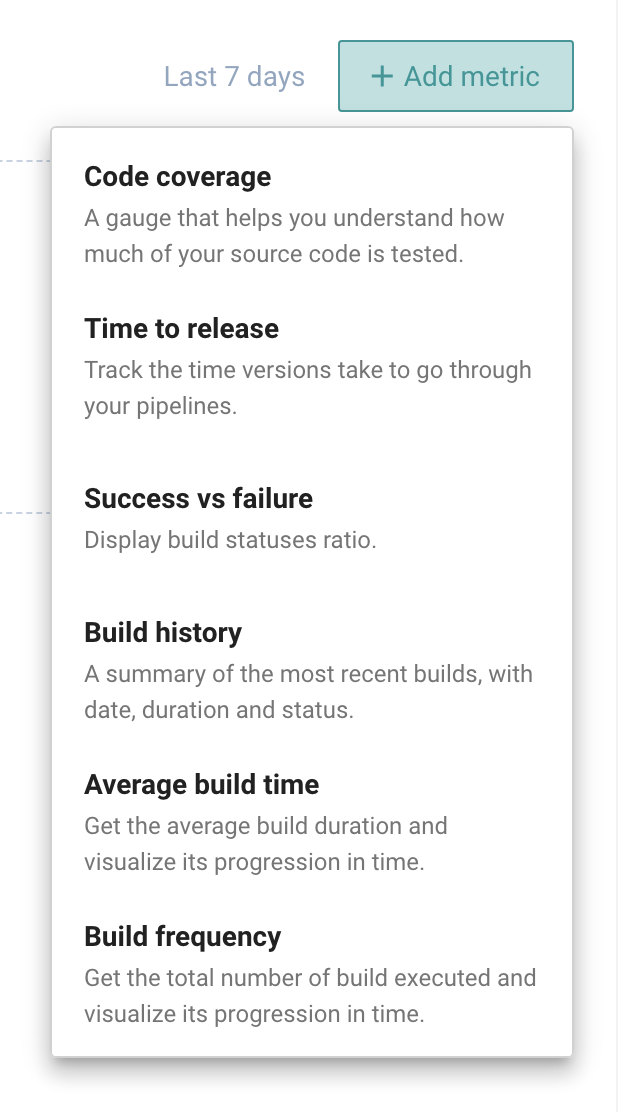

# Menu

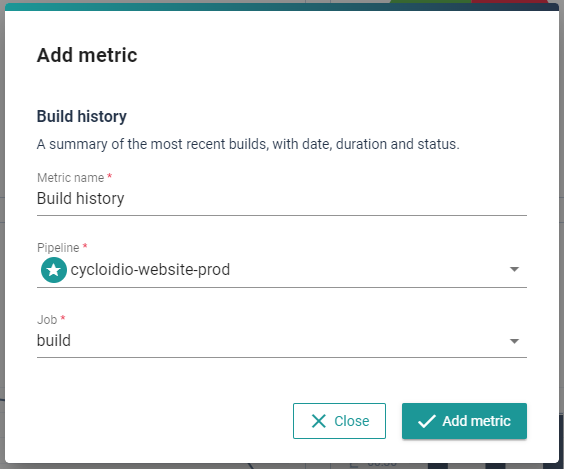

To start configuring them, go into the project's section and click 'Add metric'. You'll then get a list of possible KPIs, followed by a dedicated popup once you click on the desired one.

# List of available KPIs

There are currently 5 available KPIs, gathered into two group types: concourse and events.

| Name | Group | Description |

|---|---|---|

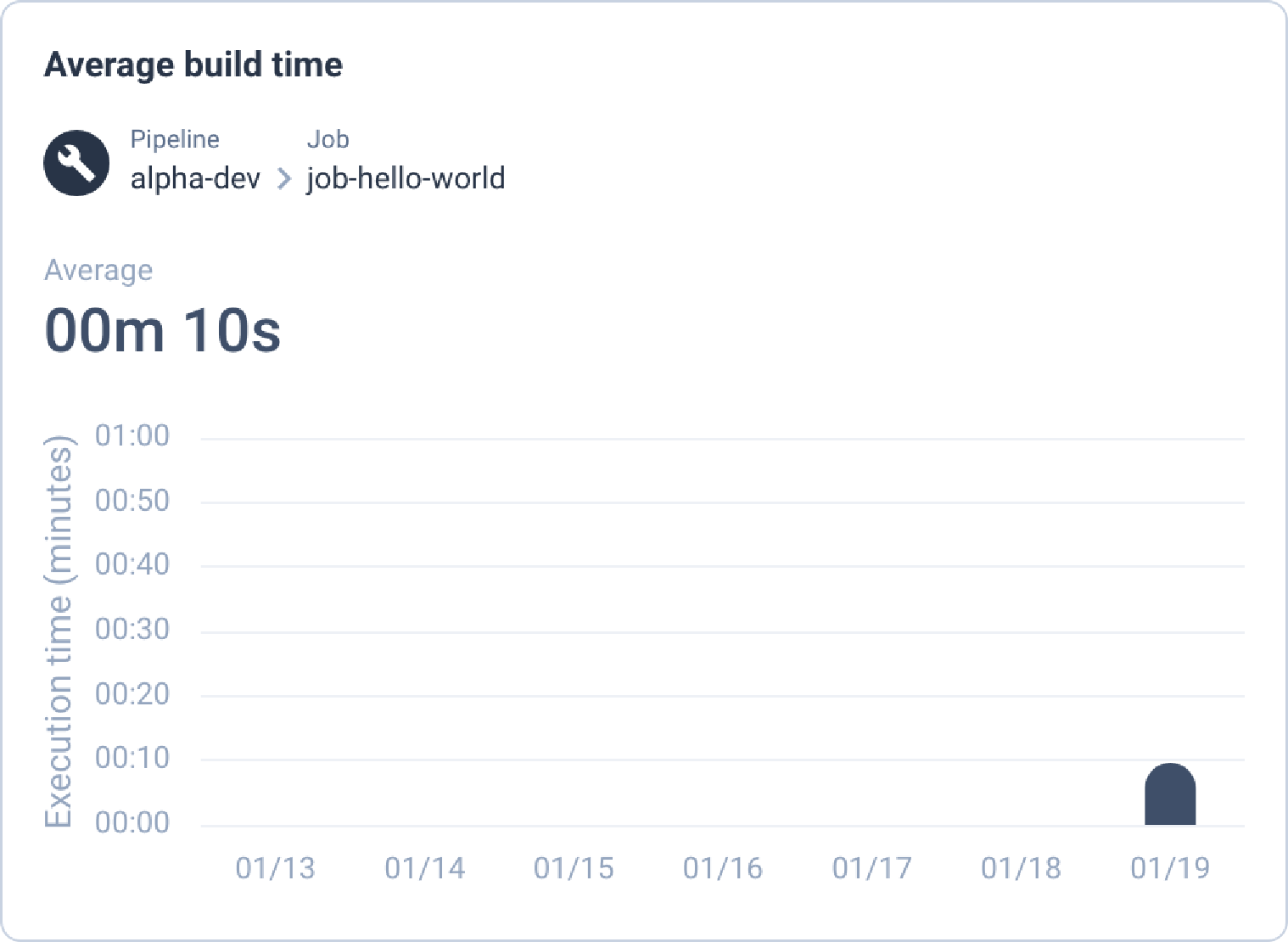

| Build average time | concourse | gives insight on the average build time of a job |

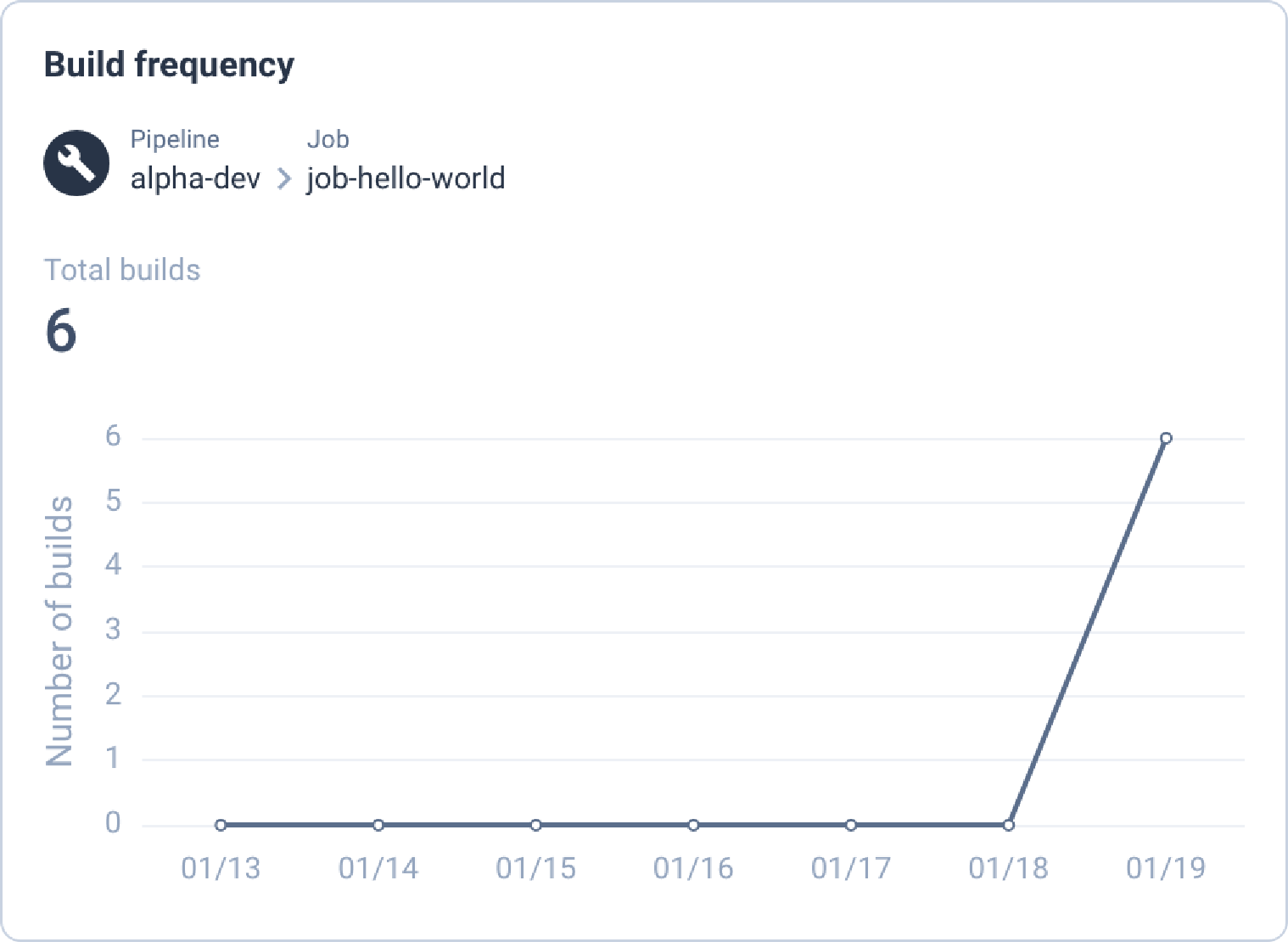

| Build frequency | concourse | shows how frequently a job is being triggered |

| Build history | concourse | provides longer job history and better representation |

| Code coverage | events | shows code's coverage as well as evolution |

| Time to release | events | indicates how long releases spend in each environment |

Notes

You might see more KPIs on the page when configuring them, because some are split into different ones depending on the widget used for each. See more details in the next sections.

# Group types

As previously mentioned, some KPIs are based on concourse, while others are based on events. The ones based on concourse simply need a configuration and will work out of the box afterwards. The events KPIs require not only a configuration, but also that you send those events to Cycloid in order to build data.

# Concourse

Currently, to configure a Concourse KPI you need to provide all elements: project, environment, pipeline and job name. However, depending on the KPI, you may or may not be able to select a different widget.

# Configuration

| Name | Type | Widgets allowed | Scope |
|---|---|---|---|
| Build average time | build_avg_time | bars | project, environment, pipeline, job |
| Build frequency | build_frequency | line | project, environment, pipeline, job |
| Build history | build_history | history, pie | project, environment, pipeline, job |

Here are some examples of how you could configure KPIs via Cycloid's CLI:

```shell
cy kpi create --name "Job test history" --org test --type build_history --widget history --project test --env test --job test
cy kpi create --name "Time to release" --org test --type time_to_release --widget line --project test --config '{"envs": ["dev", "staging", "prod"]}'
```
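If you script KPI creation, it can help to check the widget against the KPI type before calling the CLI. The following is a minimal sketch, not an official client: `ALLOWED_WIDGETS` and `kpi_create_args` are hypothetical names, the widget lists are copied from the tables on this page, and only the flags shown in the commands above are used. It builds the argument list without running anything.

```python
import json
import shlex

# Widgets allowed per KPI type, copied from the configuration tables on this page.
ALLOWED_WIDGETS = {
    "build_avg_time": {"bars"},
    "build_frequency": {"line"},
    "build_history": {"history", "pie"},
    "code_coverage": {"doughnut"},
    "time_to_release": {"line"},
}


def kpi_create_args(name, org, kpi_type, widget, project, env=None, job=None, config=None):
    """Build the argument list for `cy kpi create`, rejecting unsupported widgets."""
    allowed = ALLOWED_WIDGETS.get(kpi_type)
    if allowed is None:
        raise ValueError(f"unknown KPI type: {kpi_type}")
    if widget not in allowed:
        raise ValueError(f"widget {widget!r} is not allowed for {kpi_type}")
    args = ["cy", "kpi", "create", "--name", name, "--org", org,
            "--type", kpi_type, "--widget", widget, "--project", project]
    if env is not None:
        args += ["--env", env]
    if job is not None:
        args += ["--job", job]
    if config is not None:
        # The config is passed as a JSON string, e.g. {"envs": [...]} for time_to_release.
        args += ["--config", json.dumps(config)]
    return args


args = kpi_create_args("Job test history", "test", "build_history", "history",
                       "test", env="test", job="test")
print(shlex.join(args))  # shell-quoted command line, ready to copy or pass to subprocess
```

Passing the list to `subprocess.run(args, check=True)` would execute it, assuming `cy` is installed and authenticated.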

# Events

Tip

If you're unfamiliar with Cycloid events, take a look at our events page to see how you can send events through your pipelines. You can also check our CLI if you prefer to do that from scripts or from your favorite shell.

For the event-based KPIs, you need to configure them and then modify your pipelines or scripts so that they generate and send such events. There are currently 2 types of KPIs using events:

| Name | Type | Widgets allowed | Scope | Config |
|---|---|---|---|---|
| Code coverage | code_coverage | doughnut | project | |
| Time to release | time_to_release | line | project | `{"envs": ["env1", "env2", "env3"]}` |

The Time to release configuration lists the environments in order, from the first to the last.

For example, if you have 3 environments (production, preproduction and development), you'd set the configuration to: development, preproduction, production.

This indicates the branch order that your features go through, which helps to identify extra time spent at every stage.

Please note that the version is not used to establish whether something has been released. That is because a version could be anything: version, timestamp, tags, commit id, etc.

Instead, chronology is used to identify whether something has been deployed or not. For instance, suppose your list of events contains:

- dev - v1 at t

- dev - v2 at t+1

- prod - v1 at t+2

Both dev v1 and dev v2 will be considered released, because the prod deployment came after them (no matter the version number). However, if the prod v1 deployment event had come before the dev v2 deployment, then dev v2 wouldn't be considered released.
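The chronology rule can be sketched in a few lines. This is only an interpretation of the behaviour described above, not Cycloid's implementation: it assumes a deployment counts as released once any deployment to the next environment in the configured order happens later in time. `Deployment` and `released` are hypothetical names.

```python
from typing import NamedTuple


class Deployment(NamedTuple):
    env: str
    version: str
    time: int  # any comparable timestamp


def released(deployments, env_order):
    """Return deployments that are followed, later in time, by a deployment to the next environment.

    The version is deliberately never compared; only chronology matters.
    """
    result = []
    for d in deployments:
        idx = env_order.index(d.env)
        if idx + 1 >= len(env_order):
            continue  # last environment: there is nothing further to be released to
        next_env = env_order[idx + 1]
        if any(o.env == next_env and o.time > d.time for o in deployments):
            result.append(d)
    return result


events = [
    Deployment("dev", "v1", 0),
    Deployment("dev", "v2", 1),
    Deployment("prod", "v1", 2),
]
print([d.version for d in released(events, ["dev", "prod"])])  # ['v1', 'v2']
```

Moving the prod deployment between the two dev deployments leaves only dev v1 in the result, which matches the second case described above.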

# Formats

In order to be properly consumed by the KPIs, events have to respect a certain JSON format:

| Type | Body | Tags |
|---|---|---|
| Code coverage | `{"coverage": 42.001}` | `[{"key": "kpi", "value": "code_coverage"}, {"key": "project", "value": "<name>"}]` |
| Time to release | `{"env": "<name>", "version": "<v>"}` | `[{"key": "kpi", "value": "time_to_release"}, {"key": "project", "value": "<name>"}]` |

Important

Please ensure the body/tags are valid JSON either with an online JSON validator or using some CLI commands.
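One way to check a body locally before sending it is to parse it with a JSON library. The sketch below is an illustration under assumptions: `check_kpi_event` is a hypothetical helper, and the field checks simply mirror the formats table above rather than any server-side validation.

```python
import json


def check_kpi_event(kpi, project, body_json):
    """Parse the body, check the fields expected for the KPI, and return (body, tags)."""
    body = json.loads(body_json)  # raises json.JSONDecodeError (a ValueError) on invalid JSON
    if not isinstance(body, dict):
        raise ValueError("the body must be a JSON object")
    if kpi == "code_coverage":
        if not isinstance(body.get("coverage"), (int, float)):
            raise ValueError("code_coverage body needs a numeric 'coverage' field")
    elif kpi == "time_to_release":
        missing = [key for key in ("env", "version") if key not in body]
        if missing:
            raise ValueError(f"time_to_release body is missing: {missing}")
    else:
        raise ValueError(f"unknown KPI: {kpi}")
    # Tags in the key/value list form shown in the formats table.
    tags = [{"key": "kpi", "value": kpi}, {"key": "project", "value": project}]
    return body, tags


body, tags = check_kpi_event("time_to_release", "test", '{"env": "dev", "version": "v1"}')
print(json.dumps(body), json.dumps(tags))
```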

Here are some examples of how you could create those events via Cycloid's CLI:

```shell
cy --org test event create --tag kpi=time_to_release --title "Release" --tag project=test --message '{"env": "dev", "version": "v1"}'
cy --org test event create --tag kpi=code_coverage --title "Coverage" --tag project=test --message '{"coverage": 60.10}'
```

# Look

| KPI | Widget | Render |
|---|---|---|
| build_avg_time | bars |  |
| build_frequency | line |  |
| build_history | history |  |
| build_history | pie |  |
| code_coverage | doughnut |  |
| time_to_release | line |  |

# Frequent Questions

# I've configured my KPIs but the data is empty, what can I do?

If the configured KPIs are based on events, you have to start sending events to feed them. If they are based on concourse, the data is imported every day, so it can take up to a day for data to appear.

# Can I change the time range?

Not yet, but we are planning to add this soon!

# I cannot update my KPI's project or environment configuration, is that normal?

Yes, this is normal because the data has to match the KPI's configuration. So in order not to break that link, the configuration cannot be edited once done.

# Can I aggregate similar data together?

Not at this moment, but this is something we are working on!

# Are you planning to integrate those KPIs as part of your dashboards?

Yes!

# Could we have different dashboards/KPIs depending on the profile?

Perhaps, we are still investigating how to implement the dashboard in the most efficient way.

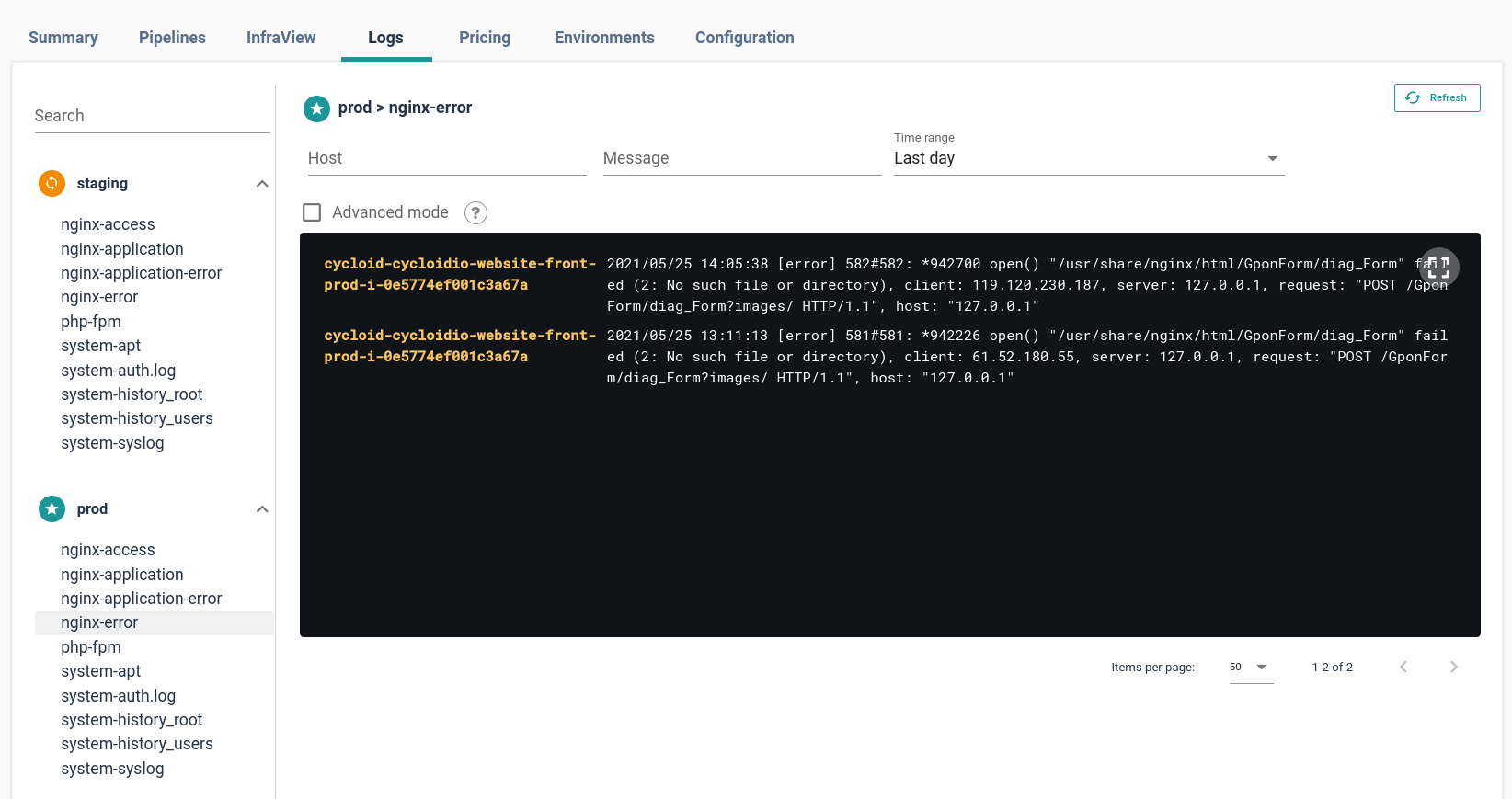

# Logs

Application and infrastructure logs can be displayed in Cycloid, offering a handy and centralized way to know what is going on in your application after it has been deployed.

Cycloid logs allow you to display logs of all your servers for all your environments in user-defined groups. Filters and searches can be applied to log entries to find a specific pattern or server.

If you have multiple environments, all environments will be displayed on the left-hand side of the page, and each of them will contain all log groups found.

Cycloid Logs is compatible with two backends:

- ElasticSearch

- AWS CloudWatch Logs

To use it, simply configure it for your project in the Cycloid console, as described in the dedicated configuration sections below.

Note

You will also need to make sure your logs are sent to one of the Cycloid-compatible backends. This can be done directly from your application or by using, for example, our Cycloid Ansible Fluentd role to send your logs directly to ElasticSearch.

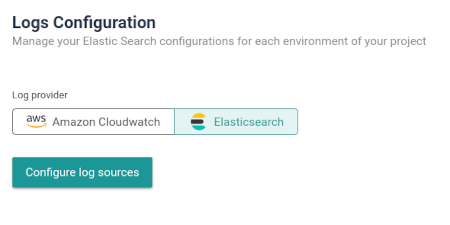

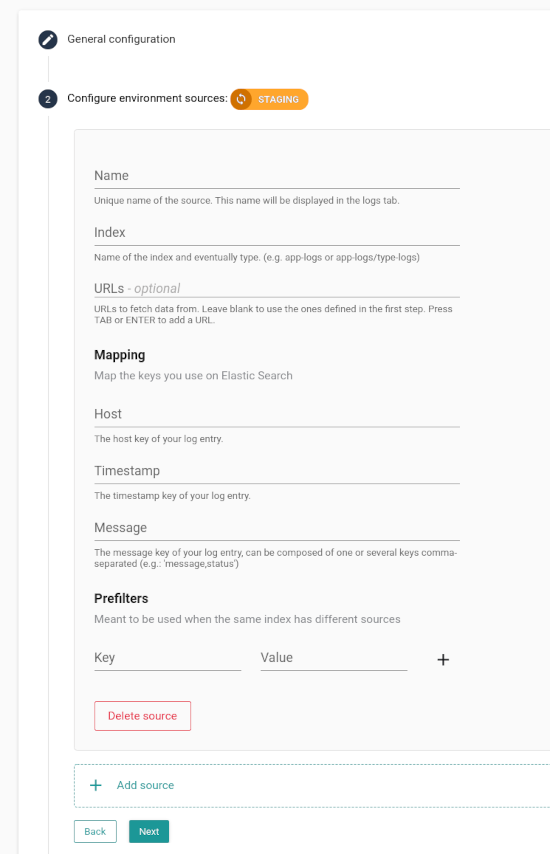

# Configure for ElasticSearch

Under your project, click on the Configuration tab, then follow instructions in the Logs Configuration section:

- Select ElasticSearch

- Then configure the access and mapping for each ElasticSearch environment source that must be used to search for log entries

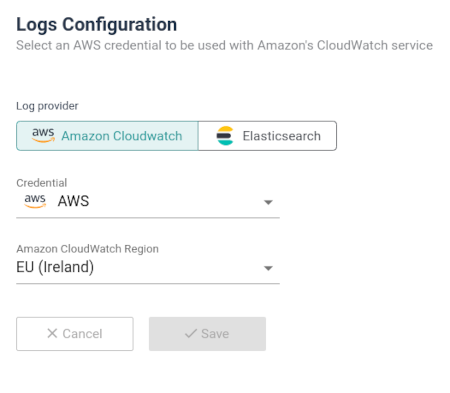

# Configure for AWS CloudWatch Logs

Under your project, click on the Configuration tab, then follow instructions in the Logs Configuration section:

Logs are fetched directly from AWS CloudWatch. A filter is applied on Log Groups to display only the relevant ones, based on the project and environment names of your stack. The Cycloid console searches for them using the following format:

$PROJECT_$ENV

Log Groups that do not match this format are not fetched.

By default, no Log Groups or Log Streams are created for you; it is up to you to create them and feed them with the logs from your systems, jobs, applications, etc. You can use services like Fluentd or Logstash to help format the logs sent to AWS CloudWatch.
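The `$PROJECT_$ENV` naming rule above can be sketched as follows, for instance to check your own Log Group names before expecting them in the console. This is an illustration only: `expected_log_group` and `select_log_groups` are hypothetical helpers, and the sketch assumes the name must match exactly, which this page does not state.

```python
def expected_log_group(project: str, env: str) -> str:
    """Return the Log Group name the console is expected to look for."""
    return f"{project}_{env}"


def select_log_groups(names, project, envs):
    """Map each environment to its Log Group, keeping only names that match the format."""
    wanted = {expected_log_group(project, env): env for env in envs}
    return {wanted[name]: name for name in names if name in wanted}


groups = ["website_prod", "website_staging", "other_prod", "website"]
print(select_log_groups(groups, "website", ["prod", "staging"]))
```

Here `other_prod` and `website` are left out because they do not follow the format for the `website` project.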

# Usage

By default Cycloid already provides some events, but you can also send your own from your pipelines using the event resource that we wrote for this purpose. This resource allows you to build and send custom events from a pipeline.

Here is a full example of the configuration and usage of Cycloid events in a pipeline:

```yaml
resource_types:
  - name: cycloid-events
    type: docker-image
    source:
      repository: cycloid/cycloid-events-resource
      tag: latest

resources:
  # Configure global Cycloid events resource
  - name: cycloid-events
    type: cycloid-events
    icon: calendar
    source:
      api_key: ((api_key))
      api_url: ((api_url))
      icon: fa-code-branch
      organization: ((customer))
      severity: info
      type: Custom
      tags:
        - key: project
          value: ((project))
        - key: env
          value: ((env))

jobs:
  - name: myJob
    plan:
      - do:
        ...
    # Send a specific Cycloid event in case of success or failure of my job
    on_success:
      do:
        - put: cycloid-events
          params:
            severity: info
            message: |
              The project ((project)) on ((env)) environment has been deployed
            title: Successful deployment of ((project)) on ((env)) environment
    on_failure:
      do:
        - put: cycloid-events
          params:
            severity: info
            message: |
              Deployment failed for ((project)) on ((env))
            title: Failed deployment of ((project)) on ((env)) environment
```

You can also send events yourself using Cycloid's API. Feel free to look at the event resource implementation for guidance on the structure of events and the possible limitations of fields.

Here is an event in JSON format:

```json
{
  "title": "title of the event",
  "message": "some useful message here",
  "icon": "<icon>",
  "tags": [
    { "key": "foo", "value": "bar" },
    { "key": "foo2", "value": "bar2" }
  ],
  "severity": "<severity>",
  "type": "<type>"
}
```
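When generating such payloads from a script, a small helper keeps the tag list in the expected key/value form. This is a minimal sketch: `build_event` is a hypothetical function, the field names are taken from the JSON above, and treating `icon` as optional is an assumption. No API endpoint is shown because this page does not give one.

```python
import json


def build_event(title, message, severity, event_type, tags=None, icon=None):
    """Return an event dict shaped like the JSON example above."""
    event = {
        "title": title,
        "message": message,
        # Convert a plain {"foo": "bar"} mapping into the list of key/value objects.
        "tags": [{"key": key, "value": value} for key, value in (tags or {}).items()],
        "severity": severity,
        "type": event_type,
    }
    if icon is not None:
        event["icon"] = icon
    return event


payload = build_event(
    "title of the event",
    "some useful message here",
    "Info",
    "Custom",
    tags={"foo": "bar", "foo2": "bar2"},
)
print(json.dumps(payload, indent=2))
```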

# Colors

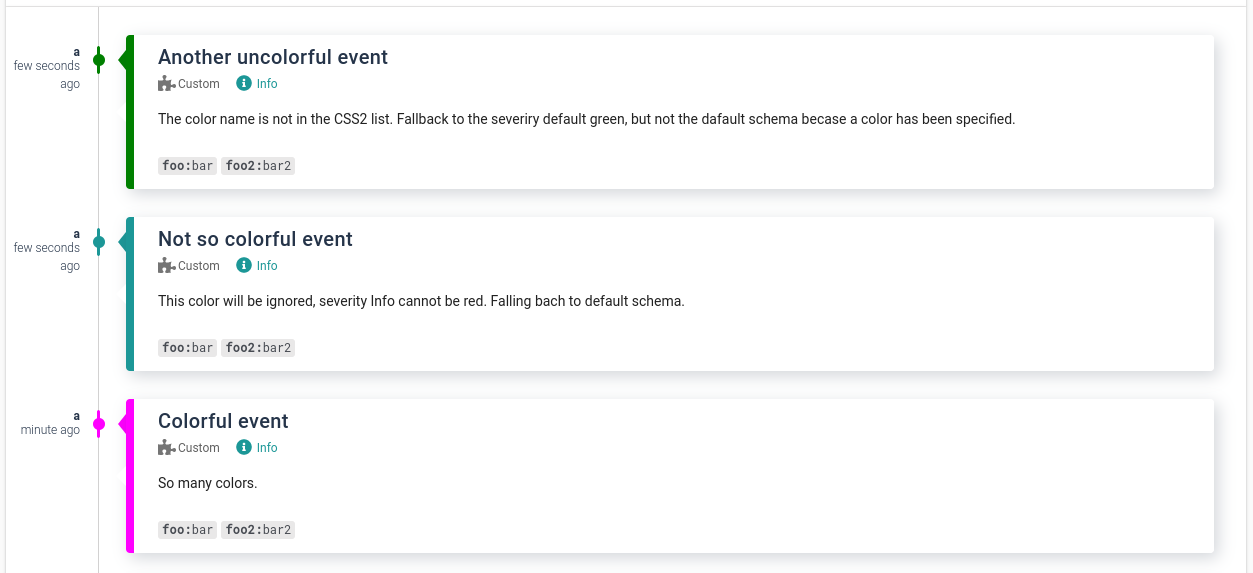

User generated events may have custom colors, as you can see in the examples below, but there are some restrictions.

- The available colors are the ones defined by keyword in the CSS 2 specs: aqua, black, blue, fuchsia, gray, green, lime, maroon, navy, olive, orange, purple, red, silver, teal, white, and yellow.

- RGB values are not accepted.

- The colors `red`, `orange`, and `green` are reserved respectively for the following severities: `Crit` and `Err`, `Warn`, and `Info`. If one of these three colors is specified and does not match the severity, it is ignored to avoid confusing situations, such as a green colored error event.

- If a color is specified but is not in the allowed list, it defaults to the color for the severity: `red`, `orange` or `green`, but not to the default Cycloid color scheme. This makes erroneous color specifications easy to identify.

An event with no color specified, or whose color is ignored, falls back to the default color scheme.
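The rules above can be summarized as a small decision function. This is a sketch of the documented behaviour, not Cycloid's code: `resolve_color` and the `"default"` sentinel are hypothetical, and comparing color names case-insensitively is an assumption.

```python
ALLOWED_COLORS = {
    "aqua", "black", "blue", "fuchsia", "gray", "green", "lime", "maroon", "navy",
    "olive", "orange", "purple", "red", "silver", "teal", "white", "yellow",
}
# Colors reserved for each severity, as listed above.
SEVERITY_COLOR = {"Crit": "red", "Err": "red", "Warn": "orange", "Info": "green"}
DEFAULT_SCHEME = "default"  # stands for the default Cycloid color scheme


def resolve_color(severity, color=None):
    """Return the color an event would be displayed with, following the rules above."""
    if color is None:
        return DEFAULT_SCHEME
    name = color.lower()  # assumption: names are matched case-insensitively
    if name not in ALLOWED_COLORS:
        # Unknown color: fall back to the severity color, not the default scheme.
        return SEVERITY_COLOR.get(severity, DEFAULT_SCHEME)
    if name in SEVERITY_COLOR.values() and SEVERITY_COLOR.get(severity) != name:
        # Reserved color that does not match the severity: ignored.
        return DEFAULT_SCHEME
    return name


print(resolve_color("Info", "fuchsia"))         # fuchsia
print(resolve_color("Info", "red"))             # default
print(resolve_color("Info", "CornflowerBlue"))  # green
```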

For example here are some events:

```json
{
  "title": "Colorful event",
  "message": "So many colors.",
  "tags": [
    { "key": "foo", "value": "bar" },
    { "key": "foo2", "value": "bar2" }
  ],
  "severity": "Info",
  "type": "Custom",
  "color": "fuchsia"
}
```

```json
{
  "title": "Not so colorful event",
  "message": "This color will be ignored, severity Info cannot be red. Falling back to default schema.",
  "tags": [
    { "key": "foo", "value": "bar" },
    { "key": "foo2", "value": "bar2" }
  ],
  "type": "Custom",
  "severity": "Info",
  "color": "red"
}
```

```json
{
  "title": "Another uncolored event",
  "message": "The color name is not in the CSS2 list. Fallback to the severity default green, but not the default schema because a color has been specified.",
  "tags": [
    { "key": "foo", "value": "bar" },
    { "key": "foo2", "value": "bar2" }
  ],
  "type": "Custom",
  "severity": "Info",
  "color": "CornflowerBlue"
}
```

Creating the above events gives the following result. Note that the events are displayed in reverse order of creation, with the most recent on top: